MOVEsician

Movesician is a mixed reality (MR) project that reimagines music creation through play and interaction. Designed for everyone, even non-musicians, it allows users to explore sound through unique scenes that combine visuals, touch, and motion.

Team: Miguel Novelo, Nina Shi, Aimee Liu, Josh He

Year: 2024

Our Vision:

Movesician makes music creation accessible and playful, inviting users to explore the boundaries of sound and interaction. Through immersive scenes, we aim to reshape how people engage with music in the digital age.

Are you ready to shape the future of music? Dive into Movesician and explore a world where sound meets creativity.

Onboarding:

The onboarding scene introduces the interface, which acts as the gateway to the three scenes.

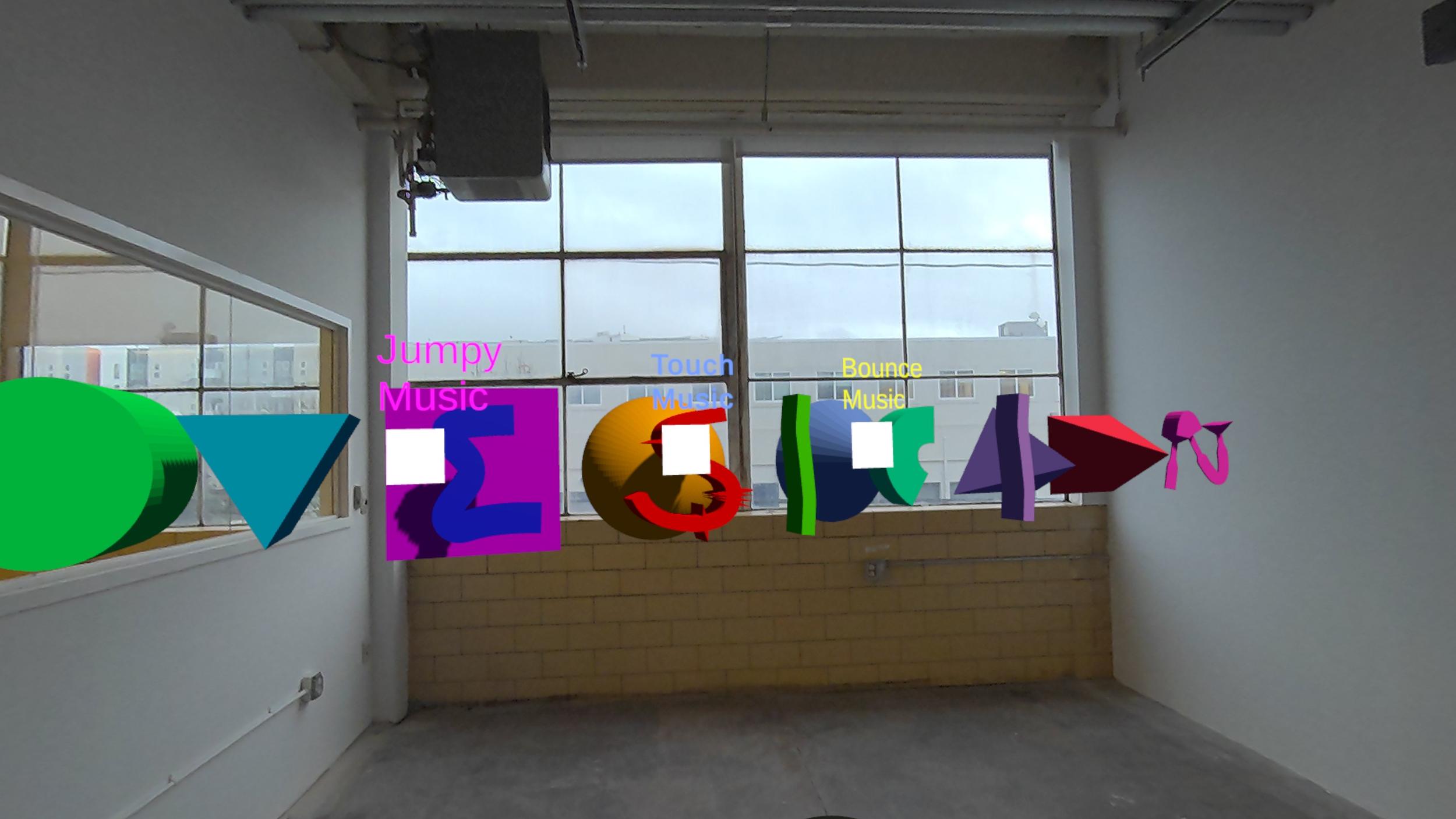

Scene 1: Jumpy Music

Users interact with floating models of the word "Movesician."

Pick up letters to hear their unique sounds.

Place them back to see them bounce, creating a visual and rhythmic effect like waves.

Scene 2: Touch Music

Users build music by stacking cubes, like playing with blocks.

When two cubes touch, they create a sound.

Players can experiment with configurations to build their own music.
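
As a rough illustration of the "touch makes sound" idea, here is a minimal sketch in Python. It is not the project's code: the `Cube` class, the note names, and the `touching` check are all hypothetical, and a real MR build would more likely rely on the engine's physics collision events. It only shows how a configuration of stacked cubes could map to a set of triggered sounds.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Cube:
    name: str
    center: tuple[float, float, float]  # position in metres
    size: float                         # edge length in metres
    note: str                           # hypothetical note assigned to this cube


def touching(a: Cube, b: Cube, tolerance: float = 1e-3) -> bool:
    """True when two axis-aligned cubes overlap or are in contact."""
    reach = (a.size + b.size) / 2 + tolerance
    return all(abs(pa - pb) <= reach for pa, pb in zip(a.center, b.center))


def contact_sounds(cubes: list[Cube]) -> list[tuple[str, str]]:
    """Return the pair of notes triggered by each touching pair of cubes."""
    return [(a.note, b.note) for a, b in combinations(cubes, 2) if touching(a, b)]


# Two cubes stacked on top of each other, and a third one set apart.
cubes = [
    Cube("base", (0.0, 0.0, 0.0), 1.0, "C4"),
    Cube("top", (0.0, 1.0, 0.0), 1.0, "E4"),
    Cube("loner", (3.0, 0.0, 0.0), 1.0, "G4"),
]
print(contact_sounds(cubes))
```

Rearranging the cubes changes which pairs touch, which is the sense in which players "build" their own music.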

Scene 3: Bounce Music

Players throw balls into the scene, where they bounce off walls and rebound boards, creating randomized music.

The more balls in motion, the more dynamic the sound.
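
The link between the number of balls and the density of the sound can be sketched with a toy simulation. Everything here is an assumption made for illustration: the one-dimensional room, the `SCALE` palette, and the `simulate_bounces` function are hypothetical and do not describe the project's implementation. Each wall hit triggers one randomly chosen note.

```python
import random

SCALE = ["C4", "D4", "E4", "G4", "A4"]  # assumed pentatonic palette


def simulate_bounces(n_balls: int, steps: int, width: float = 4.0,
                     dt: float = 0.05, seed: int = 0) -> list[tuple[int, str]]:
    """Move balls between two walls and record a random note for every bounce."""
    rng = random.Random(seed)
    # Each ball is [position, velocity] along one axis, walls at 0 and width.
    balls = [[rng.uniform(0, width), rng.choice([-1, 1]) * rng.uniform(1, 3)]
             for _ in range(n_balls)]
    events = []
    for step in range(steps):
        for ball in balls:
            ball[0] += ball[1] * dt
            if ball[0] < 0 or ball[0] > width:
                ball[0] = min(max(ball[0], 0.0), width)  # put the ball back on the wall
                ball[1] = -ball[1]                       # reverse its direction
                events.append((step, rng.choice(SCALE)))
    return events


one_ball = simulate_bounces(n_balls=1, steps=400)
five_balls = simulate_bounces(n_balls=5, steps=400)
print(len(one_ball), len(five_balls))
```

With the same seed, the five-ball run contains every bounce of the one-ball run plus the bounces of the extra balls, so it always produces more note events.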

:D Team

Creators (3D Model & Music Composition): Miguel Novelo

Builders (Game Design & Development): Nina Shi, Aimee Liu, Josh Chen

Challenge(s)

Built under a strict timeline, this was our first MR project, and we started it without a coding background. We tackled room scanning, gesture recognition, and deployment by leveraging Meta’s SDK and establishing clear GitHub workflows. The experience strengthened our ability to translate design intent into functioning MR systems while managing technical and collaborative complexity.
